Today, the world of global logistics would be nothing without the shipping container. The simple steel box revolutionized how goods are moved between continents and across oceans. Despite its simplicity, it's one of the most significant inventions ever — it also makes a great metaphor for the state of modern, global digital mapmaking.

Back in December, on his blog Map Happenings, James Killick (formerly of Etak, Apple and Esri, among others) made the case that the global mapmaking industry is stuck in the 1950s, in a time before shipping containers, and that it is in desperate need of its “shipping container moment.”

If TomTom’s recent announcements are anything to go by, that moment might not be far away.

The announcements: What's happening?

At the end of last year, TomTom announced its role as a founding member in the Overture Foundation, an open-data project that’s aiming to build “reliable, easy-to-use and interoperable open map data.”

The Overture Foundation is aiming to standardize key aspects of the location data and mapmaking industry to make working with location data a whole lot easier. At its core is an interoperable base map. Alongside this is a global entity reference system and a structured data schema. These aim to simplify interoperability by linking entities from different data sets to the same real-world entities and by defining and driving adoption of a common and documented data structure. Essentially, it ensures everyone knows what everyone else is talking about when they say or do something.
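To make the idea of a shared entity reference concrete, here is a minimal Python sketch. It assumes each source already tags its records with a common identifier for the real-world thing they describe. The ID format, the `Record` type and the feed names are hypothetical and are not taken from Overture's actual schema.

```python
from dataclasses import dataclass

# Hypothetical shared identifier for one real-world place. The ID format and
# field names are illustrative only, not Overture's actual schema.
CAFE_ID = "entity-0001"


@dataclass(frozen=True)
class Record:
    entity_id: str    # shared reference to a real-world entity
    source: str       # which data set the record came from
    attributes: dict  # whatever that source knows about the entity


def merge_by_entity(records):
    """Combine attributes from all sources under their shared entity ID."""
    merged = {}
    for rec in records:
        merged.setdefault(rec.entity_id, {}).update(rec.attributes)
    return merged


records = [
    Record(CAFE_ID, "poi_feed", {"name": "Harbour Cafe"}),
    Record(CAFE_ID, "address_feed", {"street": "1 Dock Road"}),
    Record("entity-0002", "poi_feed", {"name": "Pier Pharmacy"}),
]
merged = merge_by_entity(records)
print(merged[CAFE_ID])
```

Because both feeds point at the same identifier, joining them is a dictionary lookup rather than a fuzzy match on names or coordinates. A real pipeline would also need rules for conflicting values, which this sketch ignores (later records simply overwrite earlier ones).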

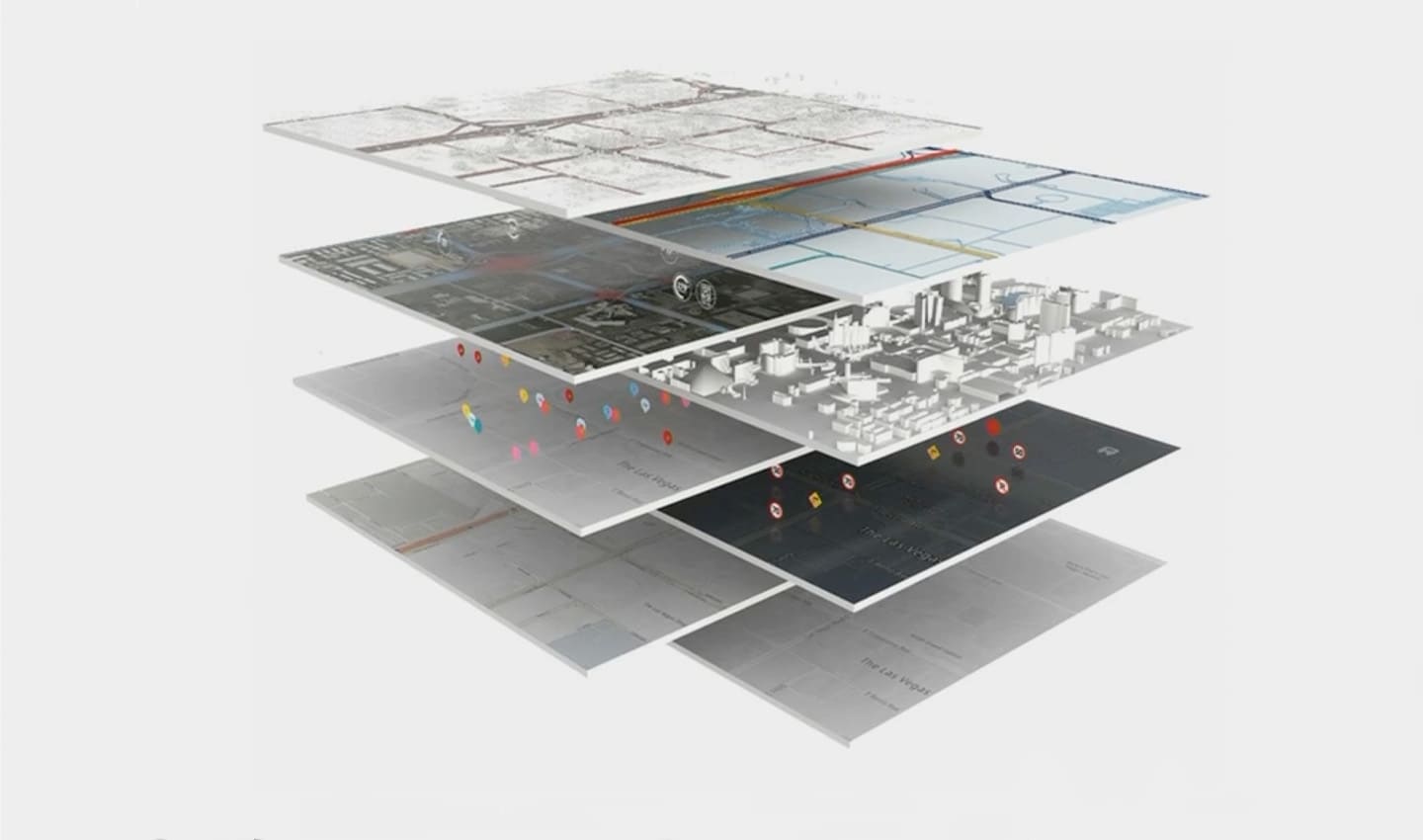

Modern digital maps are complicated. They're made up of masses of data and can be customized based on what they're needed for. One way to think of them is as a series of layers: at the most foundational level is the base map, and on top of this, other data about traffic, visualizations, routing, POIs and so on can be projected.

In November, TomTom lifted the lid on its new Orbis Maps, base map and collaborative location data ecosystem. It’s the company’s new approach to mapmaking and location-based services, which it says will power the “world’s smartest map”: a map that’s richer, more detailed and fresher than ever before. Iteration will be faster and feedback loops shorter, Yu Guo, TomTom VP Engineering, said in a recent Q&A.

According to the mapmaking company, these two technologies will combine to power the next generation of commercial location-based tech, and will do so in a way that could lead us to the industry’s “containerization moment.”

Disparate data difficulties

For years, the location tech industry has battled mountains of disparate data and systems that don’t work together out of the box.

As Killick explains, developers at location tech organizations must spend an inordinate amount of time (and money) making sense of location data, rather than building with it. They must:

Understand what’s contained within source data and how it’s organized.

Examine target systems and understand their data requirements.

Organize data for consistent output on various systems that all use different standards.

And finally, they must find a way of doing this repeatedly to keep maps up to date.

When building location tech, engineers must gather geospatial data from hundreds if not thousands of different places, from sensors to open data to government organizations. With no industry standards, each source maintains its data in different formats and structures. Even when sources report the same information about the world, there’s no guarantee they’ll follow the same conventions.
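The normalization work described above can be sketched in a few lines of Python. The example assumes two hypothetical sources that describe the same road with different field names, units and coordinate order, and maps both onto one made-up target schema. None of the field names come from a real data provider.

```python
from dataclasses import dataclass

MPH_TO_KMH = 1.609344


@dataclass(frozen=True)
class RoadSegment:
    """Hypothetical common target schema."""
    name: str
    speed_limit_kmh: float
    lon: float
    lat: float


def from_source_a(row: dict) -> RoadSegment:
    # Assumed source A: speed limit in mph, coordinates as (lat, lon).
    lat, lon = row["coords"]
    speed = round(row["max_mph"] * MPH_TO_KMH, 1)
    return RoadSegment(row["street_name"], speed, lon, lat)


def from_source_b(row: dict) -> RoadSegment:
    # Assumed source B: speed limit in km/h as a string, coordinates as [lon, lat].
    lon, lat = row["geometry"]
    return RoadSegment(row["name"], float(row["maxspeed"]), lon, lat)


a = from_source_a({"street_name": "Dock Road", "max_mph": 30, "coords": (52.37, 4.89)})
b = from_source_b({"name": "Dock Road", "maxspeed": "50", "geometry": [4.89, 52.37]})
print(a)
print(b)
```

Every new source needs its own adapter like these, and each adapter has to be maintained as the source changes. That recurring cost is what a shared, documented schema is meant to remove.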

Gathering and compiling all that information is the obvious challenge, but engineers must also reconcile its differences and make it all work together. Imagine the headache engineers face when building navigation for a car when the road network of its base map doesn’t align with the road network referenced by the routing engine. Or imagine an on-demand food delivery company trying to deliver to an area that’s been redeveloped, its map showing the new buildings but not the new addresses or entry points, because it uses different sources that don’t update together.

“It's all pretty horrible,” Killick says. But it doesn’t have to be — shipping containers might have the answer.

How the shipping container changed the world

In 1956, Malcolm McLean, a businessman from North Carolina, invented the standardized shipping container. As the owner of a trucking business, he knew the pains of transporting non-standard cargo, which could be sacks one day and wooden crates the next.

Malcolm McLean overlooking Port Newark in 1957, just one year after releasing his shipping container invention. Already, ports were full of them!

With McLean’s invention, cargo would be loaded into containers and then be transported and loaded onto standardized ships, using standardized machinery. Prices were standardized too. The shipping container made moving cargo a whole lot easier, but that was only part of its brilliance.

McLean made the patents on his invention available through a royalty-free lease to the International Organization for Standardization (ISO). Ship builders, port operators, logistics companies and anyone else interested could use the designs to make boats, trucks, equipment and tools that worked with containers. Soon, everyone was using shipping containers to move goods and the efficiency of global logistics went through the roof while the cost to move goods long distances tumbled.

What does this have to do with global mapmaking and location tech?

Most of what geolocation tech companies do is akin to loading and unloading non-standard cargo from their non-standard ships to non-standard ports. They must analyze every piece of cargo (think geospatial data). They must understand its destination, where and how it needs to be stored, manipulated and shared. They must make sure it fits in the system of their ship and that what comes out makes sense and isn’t lost or damaged along the way.

The Overture Foundation and TomTom’s new Orbis Maps could be offering solutions to this problem.

If we see companies building with location data as cargo ships, then the Overture Foundation becomes the shipping container standard. In an abstract sense, Overture is standardizing how location data and digital maps can be transported and moved from system to system (or ship to ship). And importantly, it’s doing it like McLean did: in an open fashion that anyone can adopt or start working with.

In this metaphor, TomTom’s Orbis Maps and Ecosystem become the tools that move shipping containers of data cargo between ports, ships and destinations. They organize the containers while they’re on the ship. They could even build the ships that cargo (data) moves on. Naturally, TomTom also ensures the cargo reaches its destination as intended, so its output remains valuable.

There’s some intricacy to the metaphor, though. TomTom also has its own highly valuable, proprietary location data and services. These too will be containerized so they are compatible with other data and systems in the ecosystem. Location tech companies that want to carry this kind of more specialist cargo will have to license it. Even so, they can do so in the knowledge it’s ready to work with and will fit perfectly on their ship with no extra work required, only value to add.

This all comes together to create a system that puts ship operators (or location tech companies) leaps ahead of where they’d be if they had to do and build everything themselves. They’re not wasting time loading random cargo onto their ship, putting it in place and making it work. They’re getting it on board with ease and getting down to building their core system and moving their ship to its destination.

A change of tide

There’s no way of getting around it: the lack of interoperability in geospatial data is costing the industry millions, if not billions, of dollars a year, Laurens Feenstra, VP of Product Management at TomTom, says. Mapmaking and location tech is already an expensive and complicated game, and normalizing non-standard data only makes things more costly, for no real reason. Much like shipping before the container existed.

If geospatial data were organized with more structure and interoperability, around an openly communicated and shared global standard (if it had its shipping container moment), location tech companies would be able to move data into their products with greater ease and simplicity. Engineers would be freed up to work on their core products, rather than data normalization. The whole industry and end users would benefit from better, lower-cost location products and services.

The shipping container has done more for globalization than all trade agreements of the past 50 years combined, The Economist writes. Countries that used shipping containers experienced three-figure percentage growth in bilateral trade in the five years after they started using them, and shipping costs dropped by up to 90 percent. Through standardization and interoperability, shipping got easier, and trade boomed.

Location tech developers could experience similar gains with Overture and TomTom Orbis Maps. Engineers could skip the first 20 places on the game board if location data were interoperable and standardized. Innovation, like trade in the world of shipping containers, would flourish.

It’s early days for Overture and the new TomTom Orbis Maps, but hopefully in decades to come, we’ll look back and see their launch as mapping’s “shipping container moment” and wonder how we ever managed.

If you want to learn more about how the Overture Foundation and TomTom are going to change mapping, take a look at the Overture website here and this article about TomTom’s new Orbis Maps.
